Is 0 a Natural Number: Unraveling Math's Greatest Mystery

The question of whether zero is a natural number has no single agreed answer: it is a matter of convention that varies between fields and textbooks. Although the point can seem trivial, it touches the foundations of arithmetic. This article looks at how natural numbers are defined, the historical background, and the modern conventions, so that you can tell which definition applies in your context.

Understanding Natural Numbers

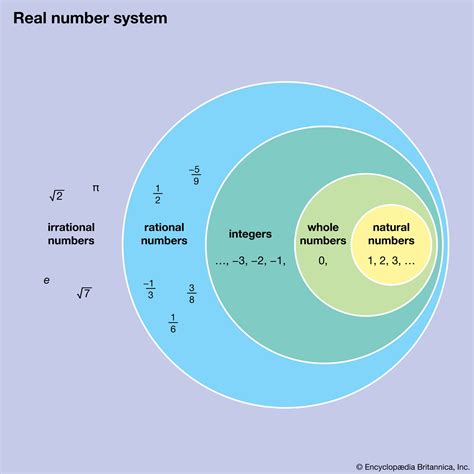

In the traditional definition, the natural numbers are the positive integers used for counting: 1, 2, 3, 4, and so on. The debate is over whether zero belongs in the set as well.

The Debate: Inclusion or Exclusion?

Historically, different cultures and mathematicians have held varying views on whether zero should be considered a natural number.

On one hand, in ancient Greek mathematics, zero was not considered a number at all, let alone a natural number. Mathematicians like Euclid did not include zero in their system of numbers.

On the other hand, the Indian numeral system, which includes zero as a full-fledged number, has influenced modern arithmetic and number theory. Modern set theory and computer science have embraced zero as a natural number to simplify mathematical expressions and algorithms.

The sections below cover the practical consequences, a common mistake, and a few tips for working with either convention.

Quick Reference

- Immediate action item: Verify the definition of natural numbers in your specific context, especially if you are working within a particular branch of mathematics or computer science.

- Essential tip: Set theory, logic, and computer science usually include zero in the set of natural numbers, while some fields, such as number theory, often start at 1.

- Common mistake to avoid: Assuming that the definition of natural numbers is universally consistent without considering the context.
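One way to follow the advice above in code is to make the convention an explicit parameter instead of an unstated assumption. The sketch below is illustrative only: the function name `is_natural` and the `include_zero` flag are hypothetical and not part of any standard library.

```python
def is_natural(value, include_zero=True):
    """Return True if value is a natural number under the chosen convention.

    include_zero is a hypothetical flag: True treats 0 as natural
    (common in set theory and computer science), False starts at 1.
    """
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(value, bool) or not isinstance(value, int):
        return False
    lower_bound = 0 if include_zero else 1
    return value >= lower_bound


print(is_natural(0))                      # True
print(is_natural(0, include_zero=False))  # False
```

Passing the flag at each call site keeps the chosen definition visible to anyone reading the code.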

Detailed Explanation: Inclusion of Zero in Natural Numbers

To comprehensively understand why zero is often considered a natural number in modern contexts, let's delve deeper into the reasons behind this inclusion.

In contemporary mathematics, particularly in set theory, logic, and computer science, it is common to consider zero as part of the natural numbers for several practical reasons:

- Simplicity and Consistency: By including zero, many definitions and algorithms need no special case. For instance, the successor function f(n) = n + 1 applies to zero like any other natural number (f(0) = 1), and counts such as the size of an empty set or the length of an empty list are themselves natural numbers.

- Unified Arithmetic: With zero included, the natural numbers contain an additive identity: n + 0 = n for every natural number n. Without zero, addition on the natural numbers has no identity element.

- Peano Axioms: The Peano axioms characterize the natural numbers by a starting element and a successor operation. Peano's original 1889 formulation started at 1, but most modern presentations take zero as the starting element: zero is a natural number, every natural number has a unique successor, and zero is not the successor of any natural number.
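The zero-based form of the Peano axioms found in many modern presentations can be sketched in Python as a starting element plus a successor constructor. This is a minimal illustration, not a formal treatment: the class names `Zero` and `Succ` and the helpers `add` and `to_int` are names chosen here for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Zero:
    """The starting natural number in this sketch."""


@dataclass(frozen=True)
class Succ:
    """The successor of another natural number."""
    pred: "Zero | Succ"


def add(a, b):
    """Addition by recursion on b: a + 0 = a, a + S(b) = S(a + b)."""
    if isinstance(b, Zero):
        return a
    return Succ(add(a, b.pred))


def to_int(n):
    """Convert a Peano numeral to a Python int by counting successors."""
    count = 0
    while isinstance(n, Succ):
        count += 1
        n = n.pred
    return count


two = Succ(Succ(Zero()))
three = Succ(two)
print(to_int(add(two, three)))  # 5
print(add(two, Zero()) == two)  # True
```

With zero as the base case, the recursive definition of addition needs only two rules, and zero acts as the additive identity.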

The Practical Impact: How This Shapes Modern Mathematics

Understanding whether zero is a natural number has significant implications for both theoretical and practical applications in mathematics and computer science. Here’s a deeper look into how this inclusion shapes modern disciplines:

- Computer Science: In computer programming, zero is often considered part of the natural numbers to simplify data structures, algorithms, and programming languages.

- Mathematics: For mathematicians, including zero in the natural numbers provides a more unified approach to number theory, simplifying proofs and the development of new theories.

- Education: In educational contexts, teaching that zero is a natural number helps students build a clearer understanding of number systems and arithmetic operations.

Detailed How-To Sections

Step-by-Step Guide: Including Zero in the Natural Numbers

Let’s walk through a detailed step-by-step process to help you understand the inclusion of zero in natural numbers:

- Step 1: Understanding the Basic Definition

First, clarify what you mean by natural numbers. Traditionally, natural numbers are the set of positive integers starting from 1. However, contemporary definitions and applications often include zero.

- Step 2: Reviewing Historical Context

Learn about historical perspectives where zero was not considered a natural number and where it was included. For instance, ancient Greek mathematicians excluded zero, while Indian mathematicians included it.

- Step 3: Analyzing Modern Context

Investigate contemporary mathematical and computational frameworks. Many modern definitions and theories include zero as a natural number due to its practical benefits in simplifying arithmetic and algorithms.

- Step 4: Applying the Inclusion

When working through mathematical problems or computer algorithms, apply the definition that includes zero where your field or codebase uses it, and state the convention explicitly wherever it could be ambiguous.

Detailed Example: Applying the Inclusion in Mathematical Contexts

To understand how this works in practice, let’s go through an example of an arithmetic operation and a programming algorithm.

Arithmetic Example:

Consider the function f(n) = n + 1 defined for natural numbers. If n is 0, applying this function gives us:

f(0) = 0 + 1 = 1. This consistency simplifies the understanding and application of the function across all natural numbers, including zero.

Programming Example:

In a programming language like Python, consider a simple algorithm to find the sum of natural numbers up to a given number:

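```python
def sum_of_naturals(n):
    """Return the sum of the natural numbers from 0 to n inclusive."""
    total = 0
    # range(n + 1) yields 0, 1, ..., n, so zero is part of the sum.
    for i in range(n + 1):
        total += i
    return total
```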

Here, the function sum_of_naturals works correctly even when n is zero. In that case range(0 + 1) yields only 0, so the loop runs once and the total stays at 0:

sum_of_naturals(0) = 0, correctly summing the natural numbers from 0 to 0 (which is just 0).
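As a cross-check, the loop can be compared against the closed form n(n + 1)/2, which also gives 0 for n = 0. This is a sketch only: `sum_closed_form` is a name introduced here for illustration, and the loop version is repeated so that the snippet runs on its own.

```python
def sum_of_naturals(n):
    """Sum the natural numbers from 0 to n inclusive with a loop."""
    total = 0
    for i in range(n + 1):
        total += i
    return total


def sum_closed_form(n):
    """Same sum via the closed form n(n + 1) / 2 (hypothetical helper)."""
    return n * (n + 1) // 2


# Both versions agree, including at the zero boundary.
for n in (0, 1, 10, 100):
    assert sum_of_naturals(n) == sum_closed_form(n)

print(sum_of_naturals(0), sum_closed_form(0))  # 0 0
print(sum_of_naturals(10))  # 55
```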

Practical FAQ

Why is there a debate about whether zero is a natural number?

The debate stems from historical perspectives where different cultures and mathematicians had different definitions. Ancient Greek mathematicians excluded zero, while modern mathematics and computer science often include it to simplify definitions and algorithms.

How does including zero as a natural number affect arithmetic operations?

Including zero gives the natural numbers an additive identity (n + 0 = n) and lets definitions cover the empty case without exceptions. For example, the function f(n) = n + 1 is defined at n = 0 just as it is for any other natural number.

What are the benefits of including zero in the set of natural numbers in computer science?

Including zero simplifies algorithms and data structures. For instance, counting from zero makes indexing in arrays and lists straightforward. It also makes certain programming tasks more intuitive and easier to implement.
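The indexing point can be illustrated with a short Python sketch. The list `letters` is made-up sample data. The idea shown is that, with zero-based indexing, an element's index equals the number of elements before it.

```python
letters = ["a", "b", "c"]

# Valid indices are the naturals 0, 1, ..., len(letters) - 1.
indices = list(range(len(letters)))
print(indices)  # [0, 1, 2]

# The index of an element equals the number of elements before it,
# so the first element sits at index 0.
for i in indices:
    assert len(letters[:i]) == i

# An empty list has no elements; its length, 0, is still a valid count.
print(len([]))  # 0
```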

How should educators approach teaching the concept of zero as a natural number?

Educators should clarify that while traditional definitions may exclude zero, contemporary mathematics and computer science include